Regulatory Landscape: FDA Clearance

In the rapidly evolving world of medical technology, “innovation” is a buzzword we hear daily. Startups and tech giants alike tout revolutionary algorithms and breakthrough models. However, in healthcare, innovation without validation is merely potential. The bridge between a clever algorithm and a life-saving tool is built on regulation.

For Artificial Intelligence (AI) in medicine, the gold standard of validation is clearance by the U.S. Food and Drug Administration (FDA). This regulatory milestone is not just a badge of honor; it is the fundamental gatekeeper for clinical adoption of AI. It separates experimental concepts from trusted medical devices.

As we integrate AI prostate cancer tools like ProstatID™ into standard urological and radiological care, understanding the regulatory landscape is crucial. Why does FDA clearance matter so much? What hurdles must be cleared to achieve it? And how does this rigorous process translate into trust for the doctor and safety for the patient?

The Significance of FDA Clearance

The FDA does not hand out clearances lightly. For a medical device, especially software that assists in diagnosing cancer, the scrutiny is intense. When a device receives FDA clearance (specifically 510(k) clearance for many AI tools), it means the agency has reviewed the evidence and determined that the device is “substantially equivalent” to a legally marketed predicate device. In other words, it is at least as safe and effective as that predicate for its intended use.

Beyond the Hype

In the tech world, software is often released in “beta” versions, with the philosophy of “move fast and break things.” In medicine, “breaking things” means harming patients. You cannot release a beta version of a cancer detection tool.

FDA clearance signals that the “move fast” mentality has been tempered by rigorous scientific method. It tells the medical community that the claims made by the manufacturer are not just marketing fluff but are backed by data. For FDA-cleared AI diagnostics, this validation is the difference between a research project and a clinical product.

Categorizing Risk

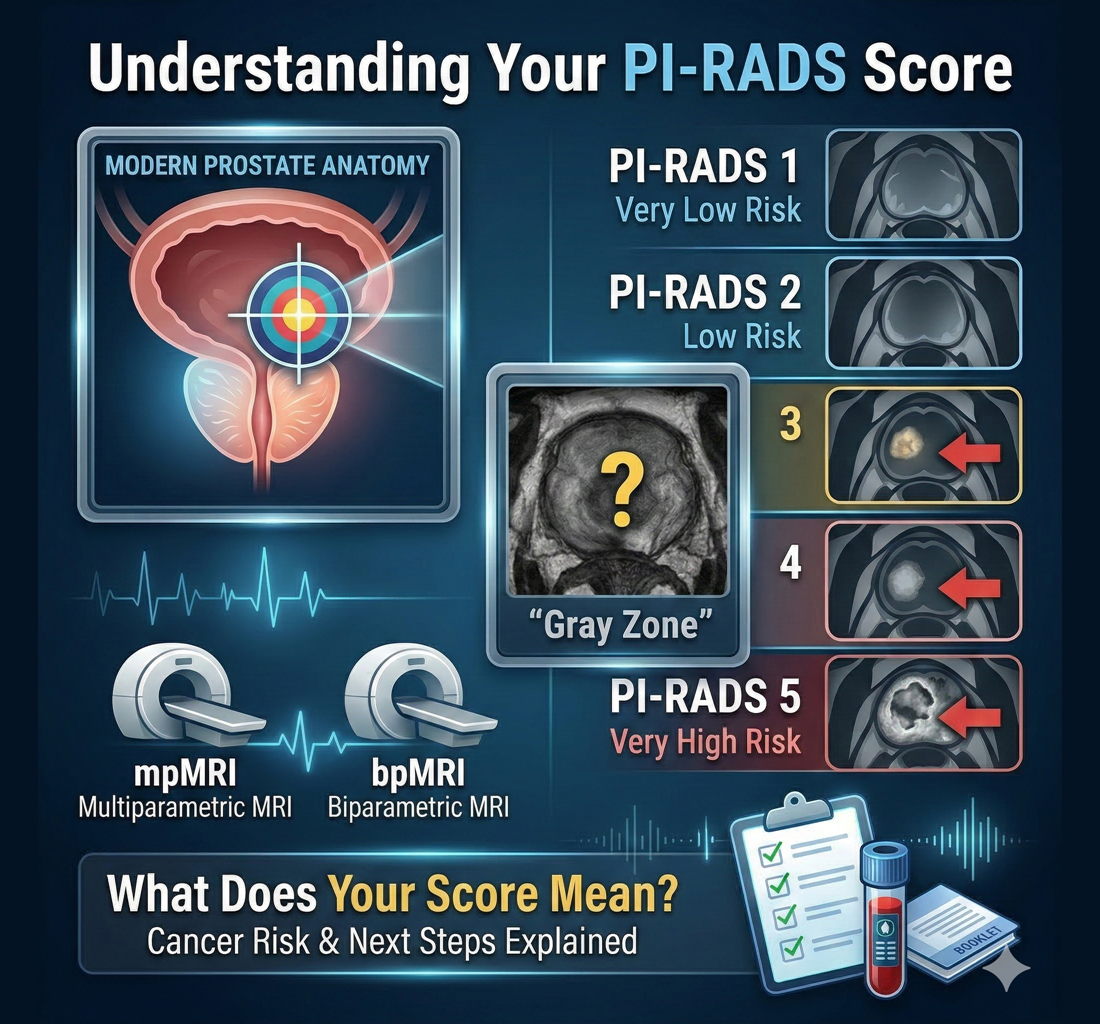

The FDA categorizes medical devices based on risk. Class I devices are low risk (like bandages). Class III devices are high risk (like pacemakers). Most AI diagnostic software falls into Class II. This category acknowledges that while the software isn’t implanted in the body, an incorrect output, such as a missed cancer, carries significant risk to the patient.

Navigating the requirements for Class II clearance forces AI developers to prove not just that their code works, but that it works consistently, safely, and effectively in the hands of the intended users (radiologists and urologists).

To appreciate the value of FDA-cleared technology, one must understand the gauntlet developers must run to achieve it. It is a multi-year process involving massive investments of time, money, and intellectual rigor.

1. Training vs. Validation Data

One of the biggest regulatory hurdles for AI is data hygiene. An algorithm can easily memorize its training data—this is called “overfitting.” It’s like a student who memorizes the answers to a practice test but fails the real exam.

To get FDA clearance, developers must prove their model generalizes well. They must strictly separate the data used to teach the AI from the data used to test it. The FDA requires robust “stand-alone” performance testing on datasets the AI has never seen before. This helps ensure that AI prostate cancer tools will perform accurately on new patients in the real world, not just in the lab.
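One common way to enforce that separation is to split by patient rather than by individual scan, so that no patient contributes cases to both sets. The following is a minimal sketch of that idea, not a description of any vendor's pipeline or of a specific FDA requirement; the function name `split_by_patient` and the patient IDs are hypothetical.

```python
import random

def split_by_patient(case_patient_ids, test_fraction=0.2, seed=0):
    """Split case indices into train/test so no patient appears in both sets."""
    # Shuffle the unique patients, not the individual cases.
    patients = sorted(set(case_patient_ids))
    rng = random.Random(seed)
    rng.shuffle(patients)

    # Hold out a fraction of patients (at least one) for testing.
    n_test = max(1, round(len(patients) * test_fraction))
    test_patients = set(patients[:n_test])

    train_idx = [i for i, p in enumerate(case_patient_ids) if p not in test_patients]
    test_idx = [i for i, p in enumerate(case_patient_ids) if p in test_patients]
    return train_idx, test_idx

# Hypothetical data: ten scans from six patients, some scanned more than once.
case_patient_ids = ["P01", "P01", "P02", "P03", "P03",
                    "P04", "P05", "P05", "P05", "P06"]
train_idx, test_idx = split_by_patient(case_patient_ids)

train_patients = {case_patient_ids[i] for i in train_idx}
test_patients = {case_patient_ids[i] for i in test_idx}
print("patients shared between sets:", train_patients & test_patients)
```

Splitting at the scan level instead would let repeat scans of the same patient land on both sides, which inflates test performance in exactly the way the practice-test analogy describes.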

2. Multi-Site Clinical Studies

Medical imaging varies wildly. An MRI scan from a top-tier university hospital using a brand-new 3 Tesla machine looks very different from a scan taken at a rural clinic on an older 1.5 Tesla magnet.

For FDA clearance, you cannot just test your AI on perfect images. You must demonstrate that it works across this spectrum of variability. This often requires multi-site clinical reader studies. In these studies, radiologists read cases with and without the AI assistance. The data must prove that the doctors perform better with the AI than without it.

This “reader study” requirement is critical. It shifts the focus from “how accurate is the computer?” to “how much better does the computer make the human?” This is the core metric for clinical adoption of AI.
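To make the “with versus without” comparison concrete, here is a toy sketch that scores one reader's calls on the same cases, unaided and aided, against ground truth. All of the data is invented for illustration, and the helper `sensitivity_specificity` is a hypothetical name. Real reader studies involve many readers and many cases and use more involved statistics (multi-reader multi-case analysis) than this simple difference.

```python
def sensitivity_specificity(truth, calls):
    """Return (sensitivity, specificity) for binary calls against ground truth."""
    tp = sum(1 for t, c in zip(truth, calls) if t and c)
    fn = sum(1 for t, c in zip(truth, calls) if t and not c)
    tn = sum(1 for t, c in zip(truth, calls) if not t and not c)
    fp = sum(1 for t, c in zip(truth, calls) if not t and c)
    return tp / (tp + fn), tn / (tn + fp)

# Invented example: 1 = cancer present / called, 0 = absent / not called.
truth   = [1, 1, 1, 1, 0, 0, 0, 0]
unaided = [1, 0, 1, 0, 0, 1, 0, 0]  # the reader working alone
aided   = [1, 1, 1, 0, 0, 0, 0, 0]  # the same reader with AI assistance

sens_unaided, spec_unaided = sensitivity_specificity(truth, unaided)
sens_aided, spec_aided = sensitivity_specificity(truth, aided)

print(f"sensitivity: {sens_unaided:.2f} -> {sens_aided:.2f}")
print(f"specificity: {spec_unaided:.2f} -> {spec_aided:.2f}")
```

The quantity of interest is the change between the two conditions, not the standalone accuracy of the software.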

3. The “Black Box” Problem

Regulators (and doctors) are wary of “black box” algorithms: models that give an answer without explaining how they got there. If an AI says “Cancer detected” but cannot show where or why, a physician has no way to check the finding, which makes the tool hard to use clinically and hard to defend to a regulator.

The FDA pushes for explainability and transparency. Tools like ProstatID™ don’t just output a probability score; they provide visual segmentation, highlighting the specific pixels and regions of interest. This transparency is key to regulatory success because it keeps the physician in the loop, allowing them to verify the AI’s findings.
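The difference between a bare score and a localized finding can be illustrated with a small sketch. It assumes a model that outputs a per-pixel probability map and flags pixels above a threshold. This is a generic illustration only; it does not describe how ProstatID™ or any other product works internally, and the function `highlight_regions`, the 0.5 threshold, and the 3x3 map are all hypothetical.

```python
def highlight_regions(prob_map, threshold=0.5):
    """Return a binary mask and the (row, col) coordinates of flagged pixels."""
    mask = [[1 if p >= threshold else 0 for p in row] for row in prob_map]
    flagged = [(r, c)
               for r, row in enumerate(mask)
               for c, v in enumerate(row) if v]
    return mask, flagged

# Hypothetical 3x3 probability map standing in for one image slice.
prob_map = [
    [0.05, 0.10, 0.08],
    [0.12, 0.81, 0.77],
    [0.07, 0.64, 0.30],
]

mask, flagged = highlight_regions(prob_map)

# A "black box" would report only this single number for the whole case.
case_score = max(max(row) for row in prob_map)

print("case-level score:", case_score)
print("flagged pixels:", flagged)
```

The case-level score alone says that something is suspicious; the mask shows where, which is what lets the physician compare the flagged region against the underlying image.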

Regulatory clearance is the legal requirement, but trust is the operational requirement. A hospital can buy a piece of software, but if the doctors don’t trust it, they won’t use it. FDA clearance is the foundation upon which that trust is built.

The Liability Shield

For a radiologist, every diagnosis carries liability. If they miss a cancer, they can be sued. If they call a false positive that leads to sepsis from a biopsy, they can be liable.

Using a non-FDA-cleared tool for diagnosis carries significant legal risk. It opens the physician to claims that they used experimental or unproven methods. Conversely, using an FDA-cleared device can provide a layer of protection. It helps demonstrate that the physician is practicing evidence-based medicine using validated tools. This liability mitigation is a major driver for the clinical adoption of AI in large healthcare systems.

Consistency and Standardization

Trust also comes from consistency. Clinicians need to know that the tool will behave predictably. The FDA’s Quality System Regulation (QSR) mandates that medical device manufacturers have rigorous controls over their software development lifecycle.

Every update, every patch, and every change to the algorithm must be documented and validated. This prevents “code creep,” where an algorithm changes in unexpected ways over time. When a urologist knows a tool is FDA-cleared, they know the software is subject to these strict quality controls, which help ensure that the results they see today are as reliable as the results they saw yesterday.
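One simple way to picture validating a change is a regression check: run the updated software on a fixed set of reference cases and flag any output that moved more than an agreed tolerance. The sketch below is an illustration under assumptions, not a statement of what the QSR requires; the function `regression_check`, the case IDs, the scores, and the 0.01 tolerance are all hypothetical.

```python
def regression_check(reference_outputs, new_outputs, tolerance=0.01):
    """Return the IDs of reference cases whose score moved beyond the tolerance."""
    return [case_id
            for case_id, ref in reference_outputs.items()
            if abs(new_outputs[case_id] - ref) > tolerance]

# Hypothetical scores from the released version on a locked reference set.
reference_outputs = {"case_a": 0.91, "case_b": 0.12, "case_c": 0.47}

# Hypothetical scores from a candidate update on the same cases.
new_outputs = {"case_a": 0.91, "case_b": 0.125, "case_c": 0.60}

drifted = regression_check(reference_outputs, new_outputs)
print("cases needing review before release:", drifted)
```

Any case in the returned list would need to be explained and documented before the update ships, which is the kind of discipline that keeps behavior predictable from one version to the next.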

You can see the real-world results of this consistency by visiting our Discover Our Impact page, where we highlight how reliable data changes patient outcomes.